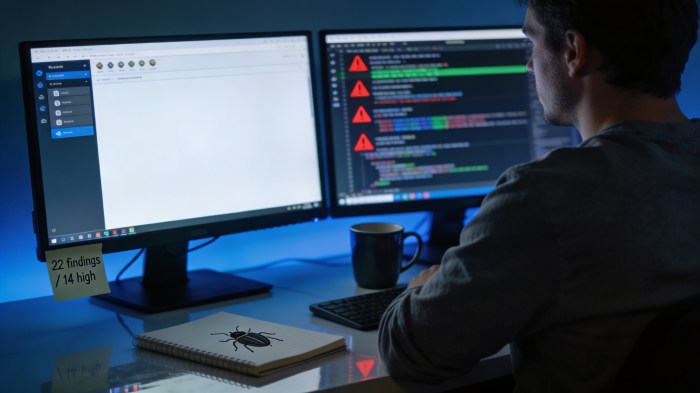

Claude AI Finds 22 Security Bugs in Firefox in Just Two Weeks, Showing AI’s Growing Role in Cyber Defense

by Suraj Malik

Artificial intelligence is steadily moving from experimentation to practical engineering work, and a recent collaboration between Anthropic and Mozilla illustrates that shift clearly.

In a focused security audit, Anthropic’s Claude Opus 4.6 model helped uncover 22 previously unknown vulnerabilities in Mozilla’s Firefox browser within just two weeks. Fourteen of them were classified as high severity.

The findings, first reported by TechCrunch, highlight how modern AI systems are beginning to assist engineers with one of the most complex tasks in software development: auditing massive codebases for subtle security flaws.

Firefox already undergoes continuous security testing, so finding that many vulnerabilities in such a short period quickly drew the attention of developers and security researchers across the industry.

A Carefully Chosen Target: Why Firefox Was Audited

Firefox was not selected randomly.

Among modern software projects, browsers represent some of the most complex and heavily audited codebases in existence. They must safely interpret and execute unpredictable web content while maintaining strict security boundaries between websites, operating systems, and user data.

Mozilla’s browser includes millions of lines of code spanning multiple languages and subsystems. Its architecture handles tasks such as page rendering, sandboxing, networking, graphics acceleration, and JavaScript execution.

Because of this complexity, security teams often treat browsers as an ideal stress test for new vulnerability-detection tools.

If an AI system can discover meaningful issues inside a mature and widely audited project like Firefox, it suggests that the technology could help improve security across many other software ecosystems as well.

Claude Opus 4.6 Was Used for the Audit

The vulnerability-discovery experiment relied on Claude Opus 4.6, one of Anthropic’s most capable AI models.

Engineers directed the model to review parts of Firefox’s codebase, beginning with the browser’s JavaScript engine (SpiderMonkey), which executes the scripts that power most modern websites.

From there, the analysis expanded to additional components within the browser.

Rather than relying on traditional automated scanning alone, the team used the AI to reason about code structure and logic patterns. This approach can help a model spot subtle flaws that static scanners might miss, especially when an issue stems from unusual interactions between components.

During the two-week investigation, Claude flagged dozens of potential vulnerabilities. After verification by engineers, 22 of those findings were confirmed as real security issues.

Most Vulnerabilities Already Patched

Mozilla responded quickly to the findings.

According to the report, most of the vulnerabilities were fixed in Firefox 148, which shipped in February. A few remaining issues are scheduled to be resolved in the browser’s next update.

Because of this rapid response, users running Firefox 148 or later are already protected against most of these vulnerabilities, and the remaining fixes are due in the next release.

Mozilla’s security workflow already includes rapid patch cycles, bug bounty programs, and collaboration with external researchers. The addition of AI-assisted auditing simply adds another layer to an already robust security pipeline.

Where AI Struggled: Turning Bugs Into Exploits

While the AI model performed well at discovering bugs, the results were very different when researchers tried to convert those bugs into working attacks.

Anthropic’s team reportedly spent about $4,000 in API credits attempting to generate proof-of-concept exploits from the vulnerabilities.

Despite those efforts, only two exploit demonstrations were successfully produced.

This distinction is important. Discovering a vulnerability is only the first step in a long process that involves crafting a reliable exploit capable of bypassing security protections and running malicious code.

The experiment suggests that while AI can assist with vulnerability discovery, exploitation still requires significant human expertise and technical creativity.

Why This Matters for Cybersecurity

The broader significance of this experiment lies not just in the number of vulnerabilities found but in the speed at which they were discovered.

Security audits that once required months of manual inspection could increasingly be accelerated by AI-assisted analysis.

Large software projects often contain millions of lines of code, and subtle mistakes can remain hidden for years. AI systems capable of reasoning about code may help engineers identify these problems much earlier.

For defenders, this shift could be transformative. Faster bug discovery means faster patches, fewer vulnerabilities lurking undiscovered, and a shorter window for attackers to exploit weaknesses.

AI as a Defensive Tool, Not Just an Offensive One

Public discussions about AI and cybersecurity often focus on the fear that malicious actors could use AI to create sophisticated attacks.

The Firefox experiment highlights the opposite possibility: AI may strengthen defensive security faster than it empowers attackers.

The difficulty Claude faced when attempting to generate working exploits suggests that weaponizing vulnerabilities still requires specialized knowledge and deep understanding of system internals.

That gap currently slows the misuse of AI-discovered vulnerabilities.

For security teams, however, the benefits are immediate. AI can help prioritize risky sections of code, suggest potential flaws, and assist human researchers in reviewing enormous projects more efficiently.

The Emerging Role of AI in Software Engineering

The collaboration between Anthropic and Mozilla reflects a broader trend across the technology industry.

AI models are no longer limited to writing text or generating images. They are increasingly being tested in engineering workflows, including debugging, documentation, testing, and security analysis.

Companies developing complex software may soon integrate AI auditing systems directly into development pipelines. These systems could continuously analyze new code commits, flag potential vulnerabilities, and assist engineers before software ever reaches production.

For open-source projects like Firefox, which rely on global communities of contributors, such tools could significantly enhance security while reducing the burden on human reviewers.

A Glimpse Into the Future of AI-Assisted Security

The Firefox experiment does not mean AI can replace security researchers. Instead, it suggests a future where human expertise and machine analysis work together.

AI can scan vast codebases and highlight suspicious patterns, while experienced engineers investigate those findings and determine whether they represent real vulnerabilities.

That partnership may become one of the most important defenses in modern cybersecurity.

If the early results from this collaboration are any indication, the next generation of software security could be shaped as much by AI assistants as by human experts.