NVIDIA Unveils Rubin Architecture: A Generative Leap Forward in AI Supercomputing at CES 2026

by Parveen Verma - 2 months ago - 3 min read

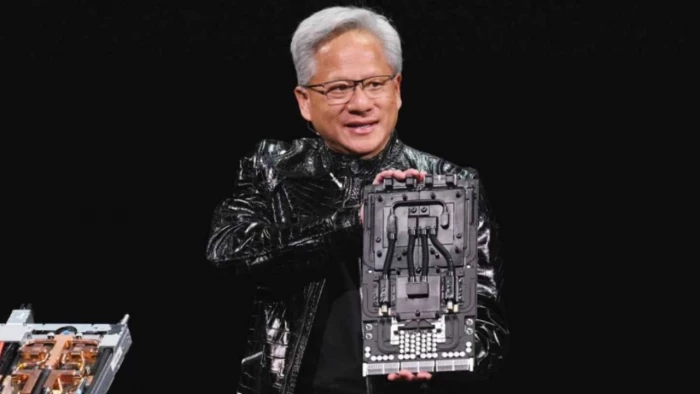

In a move that signals the dawn of a new era for artificial intelligence, NVIDIA has officially lifted the curtain on its next-generation "Rubin" chip architecture during a keynote at CES 2026. This announcement, delivered by CEO Jensen Huang, marks a pivotal shift from the previous Blackwell generation, introducing what the company describes as the world’s first "extreme-codesigned" six-chip AI platform. Named in honor of the pioneering astronomer Vera Rubin, the new architecture is meticulously engineered to handle the staggering computational demands of agentic AI and deep reasoning models, effectively redefining the data center as a high-efficiency “AI factory.”

The technical specifications of the Rubin GPU are nothing short of monumental. Built with an staggering 336 billion transistors, the flagship processor delivers up to 50 petaflops of AI inference performance using the new NVFP4 precision a fivefold increase over the Blackwell architecture. Training capabilities have also seen a massive surge, with the Rubin GPU clocking 35 petaflops, representing a 3.5x improvement. Beyond raw speed, the architecture integrates HBM4 memory for the first time, providing a colossal 22 terabytes per second of aggregate bandwidth. This leap ensures that even the most massive Mixture-of-Experts (MoE) models can operate without the memory bottlenecks that have previously constrained large-scale AI deployments.

At the heart of this platform is the Vera CPU, a custom-designed processor featuring 88 "Olympus" ARM cores. Unlike traditional general-purpose CPUs, Vera is purpose-built for high-speed data movement and agentic reasoning. When paired with the Rubin GPU through the doubled-bandwidth NVLink-C2C interconnect, the resulting "Vera Rubin Superchip" creates a unified compute engine that functions with unprecedented synchronicity. This synergy allows the new Vera Rubin NVL72 rack-scale system to act as a single, coherent supercomputer, boasting 260 terabits per second of networking bandwidth a figure NVIDIA notes is higher than the total traffic of the entire internet.

One of the most significant breakthroughs introduced with Rubin is the focus on "Inference Context Memory Storage." As AI models evolve into agents that can think and reason over long periods, the management of "KV cache" (context memory) becomes critical. NVIDIA’s new storage platform, powered by the BlueField-4 data processing unit, creates a specialized memory tier that accelerates agentic reasoning by 5x while dramatically reducing costs. NVIDIA claims that the Rubin platform can reduce the cost of generating AI tokens to just one-tenth of previous generations, a metric that will likely accelerate the democratization of high-level AI across industries.

The industry response has been immediate and widespread. Tech giants including Microsoft, AWS, and Google Cloud have already committed to integrating Vera Rubin systems into their next-generation "AI superfactories." Microsoft’s Fairwater project is expected to scale to hundreds of thousands of these superchips to power its most advanced reasoning models. While mass production was originally anticipated for later in the year, Jensen Huang confirmed that the Rubin architecture has entered full production ahead of schedule in early 2026, with customer shipments slated to begin in the second half of the year. This relentless innovation cycle moving from Blackwell to Rubin and already eyeing the future "Feynman" architecture solidifies NVIDIA's role as the primary architect of the global AI infrastructure.