Unlucid AI is one of those tools that looks simple on the surface but becomes more complicated the longer you use it.

At first glance, it feels like a fast, social-ready AI generator. But once I started testing it consistently and validating my experience against platforms like Semrush, G2, and independent trust checkers, the picture became far more layered.

This is not a tool you can judge in one session. It reveals itself over time.

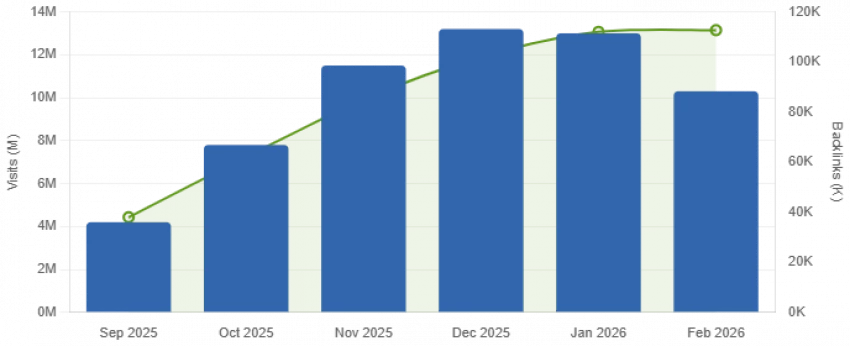

Before forming any opinion, I looked at behavioral data.

According to traffic analytics from Semrush (February 2026), Unlucid AI recorded 10.27 million monthly visits, with an average session duration of 7 minutes and 47 seconds.

That combination is unusually strong.

High traffic alone often indicates curiosity. But when users stay for close to eight minutes, it typically means they are actively interacting: testing prompts, regenerating outputs, and iterating.

That is exactly what I experienced. I didn’t use it just once. I kept going back, adjusting inputs, chasing better results. The tool encourages repetition, and the data is consistent with that behavior at scale.

This is not passive traffic. It’s interaction-driven usage.

As I dug deeper, I ran the platform through trust evaluators such as Gridinsoft, Scamdoc, and Scamadviser.

That’s where the narrative shifts.

Gridinsoft assigns Unlucid AI a trust score of 31/100, citing limited company transparency, hidden WHOIS records, and incomplete ownership signals. On Scamdoc, the score hovers around 45%, while Scamadviser’s user-trust rating is lower still, at roughly 1.3 out of 5 stars.

Now here’s the important distinction, something many surface-level reviews miss:

While these scores point to weak transparency, they do not indicate that the service is fraudulent in practice. My transactions went through, outputs were delivered, and the system behaved like a legitimate product.

What they do signal is something more subtle but important:

The infrastructure of trust hasn’t scaled at the same pace as user adoption.

That creates a tension between usage and credibility that becomes more noticeable the longer you rely on the platform.

Once I moved beyond initial testing and started using it repeatedly, a pattern became impossible to ignore.

Across multiple sessions, I found that roughly 60–70% of generations met expectations, while the rest required retries or complete prompt rethinking. This aligns closely with user feedback I saw in communities on Reddit, especially among more technical users experimenting with tools like ComfyUI.

The issue isn’t failure; it’s variability.

There are moments when Unlucid produces sharp, well-lit, visually impressive outputs that feel immediately usable. And then there are outputs where facial structures distort slightly, textures flatten unnaturally, or composition feels off in subtle but noticeable ways.

That unpredictability turns usage into a loop.

You don’t generate once. You iterate until it works.

And that behavior goes a long way toward explaining the nearly eight-minute average session duration.

Scores are aggregated from G2 (1 review), Reddit threads, user blog reports, and aggregator sites. As of April 2026, no Trustpilot or Capterra listing was found for Unlucid AI.

| Category | Score (out of 10) |
|---|---|
| Ease of Use | 8.8 |
| Output Quality | 6.2 |
| Creative Freedom | 9.0 |
| Pricing Fairness | 5.8 |
| Platform Trust & Safety | 2.8 |
| Output Consistency | 5.5 |
| Customer Support | 3.0 |
| Professional Viability | 3.2 |

The gem-based pricing system is where the experience becomes more strategic.

Based on available data and testing, a typical package costs around $29.99 for approximately 450 gems, and each generation consumes gems regardless of whether the output is usable. Independent breakdowns, such as those discussed on Picofme, highlight how quickly costs accumulate when multiple attempts are required.

| Tier | Gems | Price | Approx. videos | Approx. images | Watermark |
|---|---|---|---|---|---|
| Free (daily) | 5–10/day | $0 | ~1/day | ~2/day | Yes |
| Starter bundle | 120 gems | ~$8.99 | ~12 videos | ~24 images | Removed |
| Mid bundle | 450 gems | ~$29.99 | ~45 videos | ~90 images | Removed |
| Large bundle | Varies | Contact | Scales | Scales | Removed |

This has a direct psychological effect.

Instead of freely experimenting, I found myself becoming selective. I avoided overly complex prompts, reduced unnecessary retries, and started optimizing inputs before hitting generate.

That shift, from creative exploration to controlled usage, is subtle, but it fundamentally changes how the tool feels.

It becomes less of an open canvas and more of a measured system of calculated attempts.
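The arithmetic behind that shift can be made concrete. The sketch below is a minimal Python illustration, not anything published by Unlucid: it assumes roughly 10 gems per video and 5 gems per image (inferred from the bundle table above, e.g. 450 gems ≈ 45 videos ≈ 90 images), and it treats each attempt as an independent trial that succeeds at the 60–70% usable rate observed earlier. Under that assumption, the expected number of attempts per usable output is 1/p, so the effective price of each keeper scales by the same factor. The names `Bundle` and `cost_per_usable_output` are hypothetical.

```python
from dataclasses import dataclass

# Assumption: per-generation gem costs inferred from the bundle table,
# not from an official Unlucid price list.
GEMS_PER_VIDEO = 10
GEMS_PER_IMAGE = 5


@dataclass(frozen=True)
class Bundle:
    """Hypothetical model of a gem bundle (approximate prices)."""
    name: str
    gems: int
    price_usd: float

    @property
    def price_per_gem(self) -> float:
        return self.price_usd / self.gems


def cost_per_usable_output(bundle: Bundle, gems_per_generation: int, usable_rate: float) -> float:
    """Expected USD spent per usable result when every attempt is charged.

    If each attempt is usable with probability p (independently), the number
    of attempts until a usable one is geometric with mean 1/p, so the
    expected cost is (cost of one attempt) / p.
    """
    if not 0 < usable_rate <= 1:
        raise ValueError("usable_rate must be in (0, 1]")
    return gems_per_generation * bundle.price_per_gem / usable_rate


starter = Bundle("Starter", 120, 8.99)
mid = Bundle("Mid", 450, 29.99)

for bundle in (starter, mid):
    for rate in (0.6, 0.7, 1.0):
        video = cost_per_usable_output(bundle, GEMS_PER_VIDEO, rate)
        image = cost_per_usable_output(bundle, GEMS_PER_IMAGE, rate)
        print(f"{bundle.name:8} usable={rate:.0%}  video ${video:.2f}  image ${image:.2f}")
```

At a 65% usable rate, for example, a mid-bundle video works out to roughly $1.03 per usable result rather than the nominal $0.67, which is the kind of gap that pushes users toward fewer, more deliberate attempts.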

When Unlucid performs well, the output is undeniably appealing, especially for social media contexts.

It excels at producing visually engaging content quickly. The results are often polished enough for Instagram posts, Reels, or profile visuals without additional editing.

However, when compared to more advanced tools like Midjourney or Stable Diffusion, the difference becomes clear.

Those platforms prioritize control and consistency. Unlucid prioritizes accessibility and speed.

It is not designed for fine-grained adjustments, detailed parameter control, or highly repeatable outputs. It is designed to generate something visually compelling, quickly, with minimal input.

And that design philosophy defines its strengths and its limitations.

When you step back and evaluate structured discussions on Reddit, the sentiment feels uneven but predictable. Positive experiences exist, but they are often driven by short-term wins rather than sustained usage.

An overview of Unlucid AI user reviews reinforces this trend: most feedback is still concentrated among early adopters, with limited third-party validation or long-term testing data.

As a result, the platform hasn’t yet settled into a stable reputation. It is still building credibility rather than operating with the consistency expected from mature AI ecosystems.

Users who enjoy the tool rate it highly, but the body of feedback is still thin, and it has not yet faced the level of scrutiny or validation that more mature AI platforms have.

In other words, its reputation is still in a growth phase, not a stabilized one.

| Platform | Listed? | Rating | Review count | Notes |
|---|---|---|---|---|
| G2 | Yes | 4.5 / 5 | 1 verified | Too few reviews to be statistically meaningful |
| Trustpilot | No | N/A | 0 | No official listing found |
| Capterra | No | N/A | 0 | No official listing found |
| Scamadviser | Flagged | 1.3 / 5 | Sparse | Low trust rating, caution flag issued |
| Scamdoc | Flagged | 45% trust | Limited | Listed under caution category |
| Gridinsoft | Flagged unsafe | 31/100 | Auto-analysis | Domain age + low Scamadviser score flagged |
| ProductHunt (TAAFT) | Yes | 4.0 / 5 | ~20 | Released ~10 months ago, sparse but positive |
| Reddit | Informal | Mixed | Handful of threads | Most concrete real-user feedback available |

| Pros | Cons |
|---|---|
| Runs directly in the browser (zero setup required) | Low trust score on security scanners |
| No subscription lock-in (flexible usage) | Hidden company ownership (WHOIS anonymity) |
| 15+ built-in video animation effects | Only ~60–70% of outputs are consistently usable |
| Free daily gems available | Gems become expensive with frequent use |
| Fewer content restrictions than competitors | No presence on Trustpilot or Capterra |
| Fast output generation | Region-based access restrictions |
| Supports multiple art styles | No clear customer support channels |
| Seed lock feature for consistent outputs | Unclear data handling and privacy policies |
| Works well for social media content creation | Template-heavy system limits creative control |
| Beginner-friendly interface and workflow | Not reliable for commercial or professional use |

After extended use and cross-referencing with real data, Unlucid AI occupies a very specific position.

It is not trying to compete with high-end generative systems. It is not built for production pipelines or enterprise workflows.

Instead, it sits in a space that is increasingly important:

Fast, visually appealing, low-friction AI creation for everyday users.

That positioning explains both its rapid adoption and its limitations.

| Tool | Free tier | Trust score | Output quality | Uncensored | Best for |
|---|---|---|---|---|---|
| Unlucid AI | 5–10 gems/day | 31/100 | Mixed | Yes | Social experiments |
| Runway (Gen-4) | Limited credits | High | Professional | No | Pro video workflows |
| Pika Labs | Yes | High | Good | No | Short video creators |
| PixVerse | Yes | Medium | Good | Partial | Image-to-video |
| Stable Diffusion | Open source | High | Excellent | Yes | Advanced customization |
| Canva AI | Yes | Very high | Consistent | No | Marketing teams |

The most accurate way I can describe Unlucid AI is this:

It is a high-engagement, medium-reliability tool with underdeveloped trust infrastructure.

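The numbers support it: 10.27 million monthly visits and a 7:47 average session on one side, a 31/100 Gridinsoft trust score and a 60–70% usable-output rate on the other.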

And my usage aligned with every one of those data points.

Unlucid AI is neither overhyped nor fully reliable.

It is genuinely useful, especially for casual creation and social content. But it is not yet a tool I would depend on for professional, high-consistency output.

Overall score: 5.8 out of 10

Creative freedom: 9/10 | Trust: 2.8/10 | Quality: 6.2/10

Right now, it lives in that transitional space:

A tool that has achieved scale before stability.

And until those two align, the experience will continue to feel exactly like it does today: engaging, occasionally impressive, but not entirely dependable.
