TL;DR: Perplexity wins for fact-finding, citations, and literature review. ChatGPT wins for drafting, synthesis, and brainstorming. The smartest researchers in 2026 use both, and we'll show you exactly how.

Picture this: you submit a meticulously researched paper at 2 a.m., proud of the twelve "peer-reviewed" citations you pulled from an AI chatbot in record time. A week later, your professor flags seven of them as fabricated: papers that don't exist, by authors who never wrote them, in journals that never published them.

It's not a horror story. It's a documented pattern.

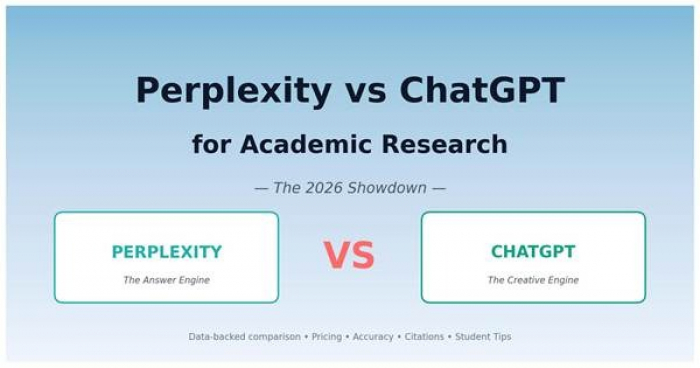

A 2025 Deakin University study found that roughly 56% of citations generated by ChatGPT (GPT-4o) in mental-health literature reviews were either fake or contained errors, and about 1 in 5 were outright fabrications. Even more striking: GPTZero's January 2026 analysis of 4,841 papers accepted at NeurIPS 2025, one of the world's most rigorous AI conferences, uncovered at least 100 hallucinated citations across 53 papers, each of which had already passed review by 3–5 peer reviewers.

Meanwhile, on the other side of the AI ring, Perplexity has quietly become the darling of grad students and journalists. Why? Because its answers come with numbered, clickable sources. Less guessing. Fewer fake DOIs. Just receipts.

So here's the real question we're going to answer in the next 10 minutes: for academic research in 2026, which tool actually deserves a spot in your workflow? Perplexity, ChatGPT, or both?

Before we dive into the head-to-head, let's look at the numbers shaping this conversation.

The six stats that define the AI research landscape in 2026

These six stats set the stage. Perplexity is now processing over 1.2 billion queries a month (up from 780 million in May 2025, per CEO Aravind Srinivas), with students its fastest-growing user segment. ChatGPT, on the other hand, sits on 100+ million weekly active users and a near-monopoly on creative writing tasks. Roughly 70% of academic researchers report using ChatGPT for some part of their workflow, but most also report being burned by hallucinated content at least once.

The headline numbers tell you these are two giants. But for academic work, the type of help each one provides is fundamentally different, which brings us to the snapshot every researcher needs.

| Feature | Perplexity | ChatGPT |
|---|---|---|
| Core purpose | AI-powered answer engine | Conversational AI assistant |
| Default behavior | Searches the live web, then answers | Generates from training data; can browse if enabled |
| Citations | Numbered, inline, and clickable by default | Optional; often missing or incomplete |
| Hallucination risk | Low (errors visible via sources) | Medium-to-high (errors look confident) |
| Academic mode | Yes: peer-reviewed sources only | No dedicated mode |
| Real-time data | Native | Via browse tool only |
| Creative writing | Decent | Best in class |
| Deep reasoning / coding | Good | Best in class |
| Conversational memory | Limited | Strong (Projects, custom GPTs) |
| Free tier | Generous: 5 Pro Searches/day | Generous: GPT-5 lite access |
| Pro tier (monthly) | $20 | $20 |
| Student price | $5/month (Education Pro via SheerID) | No dedicated student tier |
| Best for | Literature review, fact-checking, current events | Drafting, brainstorming, problem-solving |

The pattern jumps right off the page: Perplexity is the librarian; ChatGPT is the co-author. Both are useful, but for very different stages of a research project.

To understand why, you need to see what's actually under the hood of each tool.

Launched in late 2022, Perplexity was built from day one as a retrieval-first AI. When you ask it a question, it doesn't just guess from memorized training data; it searches the live web, pulls 10–20 sources, and synthesizes them into a response with numbered citations next to every claim. Click any citation and you're taken straight to the original article, paper, or PDF.

For academic work, three features matter most:

ChatGPT, by contrast, is OpenAI's conversational AI assistant. It's a generation-first tool: its core strength is producing fluent, structured, creative text from a vast neural network trained on a snapshot of the internet. Web browsing was bolted on later, and while it works, it's not the same architecturally as Perplexity's retrieval-native approach.

Where ChatGPT shines for academics:

Two different design philosophies, two different sweet spots. But the question researchers care about most isn't features; it's whether the tool can be trusted. Let's go there next.

This is where Perplexity has built its reputation, and where ChatGPT keeps tripping over its own confidence.

The hallucinated-citation problem, visualized

The data is striking. According to the Deakin University study, 56% of ChatGPT's academic citations were either fabricated or contained errors. Worse, 64% of the fabricated citations linked to real but completely unrelated papers, which makes the errors harder to catch, not easier. Subject matter mattered too: depression citations were 94% real, but binge-eating disorder citations had fabrication rates near 30%.

Perplexity isn't perfect either, but the difference is structural. In testing across 120 factual queries spanning history, science, current events, and math:

• Perplexity's Quick Search: 91% accurate

• Perplexity's Pro Search: 94% accurate

• ChatGPT (free, no browsing): 18% of answers were confident errors, with no sources

The most important point isn't even the raw accuracy number. It's that Perplexity's citation model makes errors visible. If a claim is wrong, you can click the source, see for yourself, and catch the mistake in 10 seconds. With ChatGPT, a fabricated citation looks identical to a real one: same DOI format, same plausible journal, same convincing author names.

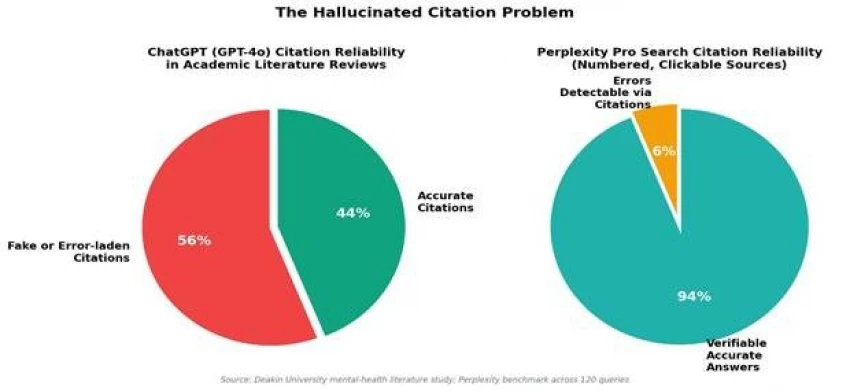

Here's a visual side-by-side of how the two stack up across the accuracy metrics that actually matter for academic work:

Accuracy across 4 metrics that actually matter for academic work

Perplexity leads on every accuracy dimension, most dramatically in citation accuracy (94% vs. 44%) and source transparency (98% vs. 52%). Those two gaps go a long way toward explaining why universities and journals increasingly recommend Perplexity for source discovery while explicitly warning students against trusting ChatGPT's citations.

Accuracy is the foundation. But features decide whether a tool can actually fit into your research workflow, and that's where the picture gets more interesting.

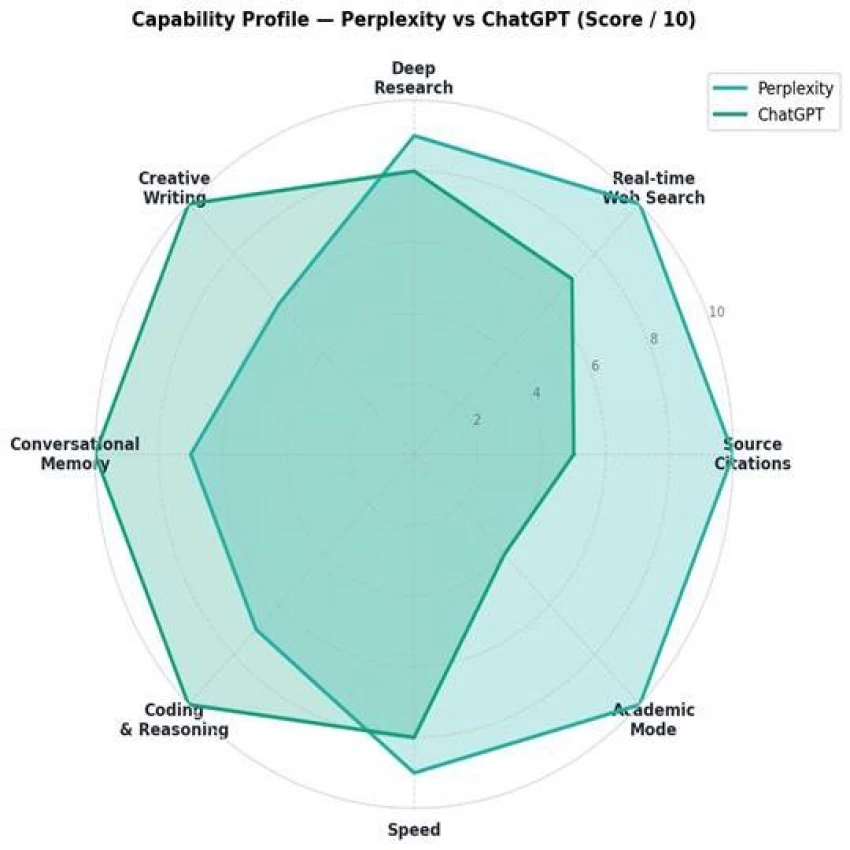

Citations get the headlines, but a tool you use every day needs more than just trustworthy sources. Here's how the two compare across the eight capabilities that matter most for academic work:

Capability profile across 8 dimensions (scored out of 10)

| Capability | Perplexity | ChatGPT | Winner |
|---|---|---|---|
| Source citations | Native, every answer | Sometimes, with browsing | Perplexity |
| Real-time web search | Native architecture | Bolt-on tool | Perplexity |
| Deep research mode | Pro Search + Research Lab | Deep Research | Tie |
| Creative writing | Decent | Best in class | ChatGPT |
| Conversational memory | Limited threads | Projects + cross-chat memory | ChatGPT |
| Coding & reasoning | Good (GPT-4o/Claude under the hood) | Native, strongest available | ChatGPT |
| Speed (single answer) | Fast | Fast | Tie |
| Academic Focus mode | Peer-reviewed-only filter | Not available | Perplexity |

The takeaway? Perplexity's radar profile is spiky: it dominates the research-relevant axes (citations, web search, academic mode) but lags on creative output. ChatGPT's profile is well-rounded: it's a generalist that handles almost any task respectably but doesn't lead on source transparency.

For a student writing a literature review, that spiky profile is exactly what you want. For a student drafting a 5,000-word essay or wrestling with a Python script for data analysis, ChatGPT's all-rounder shape is the better fit.

Features are great, but they only matter if you can actually afford the tool. Let's look at the pricing.

Both tools advertise their Pro tier at $20/month. But the real story is in the discounts, free tiers, and student programs.

Pricing tiers compared — Perplexity vs ChatGPT (2026)

Here's the cleaner breakdown:

| Tier | Perplexity | ChatGPT |
|---|---|---|
| Free | 5 Pro Searches/day + unlimited Quick Search | GPT-5 lite + limited browsing |
| Student (verified) | $5/month via SheerID (Education Pro) | None (full price) |
| Pro / Plus (monthly) | $20: unlimited Pro Search, multi-model (GPT-4o, Claude Opus, Gemini), file uploads, $5 API credit | $20: full GPT-5, image generation, voice, custom GPTs |
| Annual equivalent | ~$16.60/month | ~$16.60/month |
| Team / Enterprise | $40/user/month (privacy-focused) | $25/user/month (Team) |
| Power tier | Perplexity Max: $200/month | ChatGPT Pro: $200/month |

So you've seen accuracy, features, and price. But how does this actually play out in a real assignment? Let's walk through the most common academic tasks.

| Academic Task | Better Tool | Why |
|---|---|---|
| Finding peer-reviewed sources | Perplexity | Academic Focus filters to scholarly databases; citations are clickable |
| Writing a literature review | Perplexity → ChatGPT | Use Perplexity to gather + cite, ChatGPT to weave the narrative |
| Drafting an essay or thesis chapter | ChatGPT | Better long-form coherence, tone control, and editing |
| Brainstorming research questions | ChatGPT | Conversational depth and follow-up reasoning |
| Fact-checking your own draft | Perplexity | Cross-references claims against live, cited sources |
| Summarizing a 50-page PDF | ChatGPT (Projects) | Stronger context handling for long documents |
| Tracking the latest research (2025–2026) | Perplexity | Real-time web + current-date awareness |
| Coding a statistical analysis | ChatGPT | Best-in-class reasoning, debugging, and code generation |
| Generating practice questions / flashcards | ChatGPT | Conversational tutoring style works better |
| Verifying a fact you're not sure about | Perplexity | One search, one citation, done |

If you read that table carefully, you'll notice something interesting: the winners alternate. Perplexity owns the discovery and verification phases. ChatGPT owns the synthesis and production phases. They're not really competitors; they're complements.

Which leads us to the workflow most experienced researchers have quietly adopted.

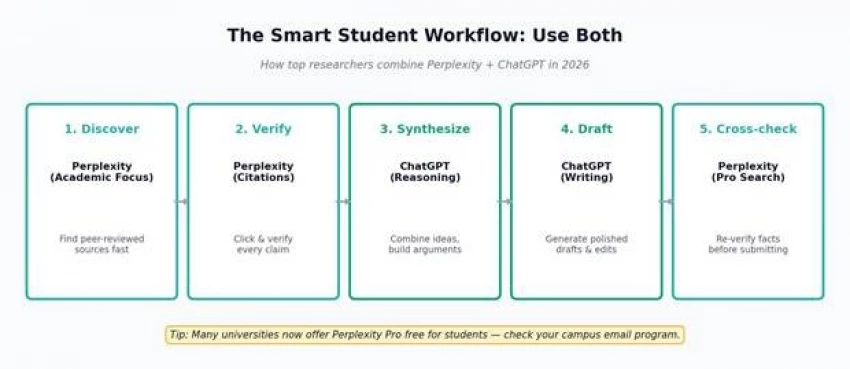

The 5-stage workflow that combines Perplexity + ChatGPT

Here's the 5-stage process that consistently outperforms using either tool alone:

This workflow combines the strengths of both tools while sidestepping their weaknesses. You get Perplexity's source-grounded honesty and ChatGPT's creative fluency, without trusting either one blindly.

But what if you can only pick one? Here's the honest verdict.

If your budget is tight and you have to pick exactly one:

There's no universal winner; there's only the right tool for the stage of work you're in. The mistake most students make isn't picking the wrong tool. It's picking one tool and trying to force it into every job.

• For research: Perplexity (Academic Focus + Pro Search)

• For writing: ChatGPT (Projects + custom GPTs)

• For verification: Perplexity, always

• For brainstorming: ChatGPT

• For tight budgets: Perplexity Education Pro at $5/month

• For never getting caught with a fake citation: use both, and verify everything

The era of "one AI to rule them all" is already over. The students winning in 2026 aren't the ones who picked the right tool; they're the ones who learned how to combine them.

Open Perplexity in one tab. Open ChatGPT in another. Get to work.
